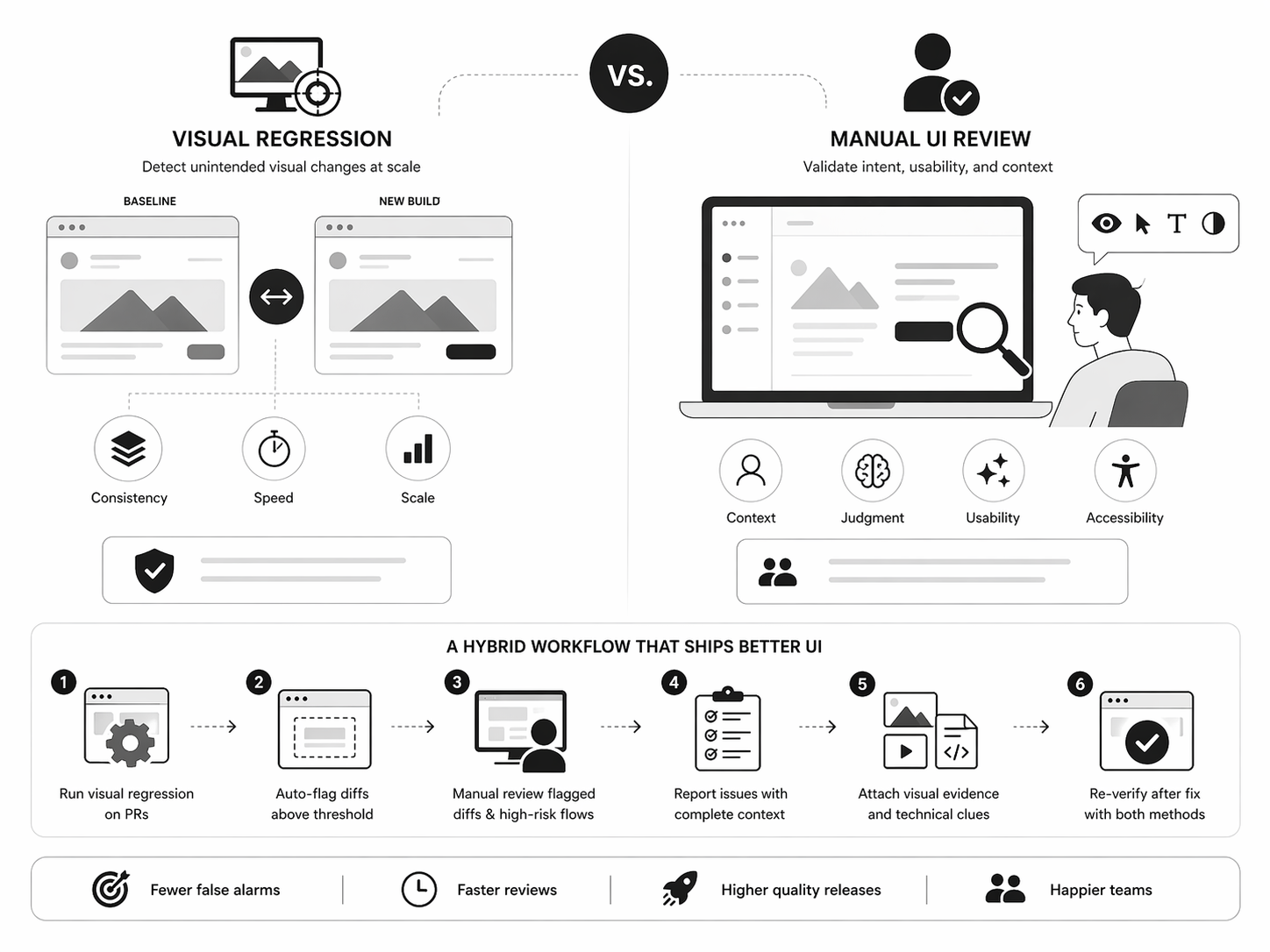

Visual Regression vs Manual UI Review: What to Use When

Teams often treat visual regression testing and manual UI review as competing approaches. They are not. They solve different problems at different stages of product delivery.

The better question is not "Which is better?" It is "Which one should we use for this type of risk?"

Why this decision matters

Much of the delay in shipping UI comes not from writing code but from review churn:

- "Looks off on my screen."

- "Can't reproduce."

- "It passed tests, but still feels wrong."

- "This changed on one breakpoint and broke another."

This happens when teams rely on a single review method for every UI problem. Visual bugs are diverse, so your review strategy should be too.

What visual regression is good at

Visual regression testing compares screenshots between builds and flags unexpected differences. It excels at catching unintended changes consistently and at scale.

Use it when you need to:

- monitor reusable components across many pages

- detect CSS and layout regressions after dependency or token updates

- enforce consistency in design-system-heavy interfaces

- add fast PR guardrails for critical flows
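The core comparison behind visual regression can be sketched in a few lines. This is a minimal illustration, assuming both screenshots are already decoded into equal-sized grids of RGB tuples. Real tools decode image files and often use perceptual or anti-aliasing-aware comparisons, so `diff_ratio` and its `tolerance` parameter are hypothetical names chosen for this sketch.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each channel 0-255
Image = List[List[Pixel]]     # rows of pixels (hypothetical in-memory format)


def diff_ratio(baseline: Image, candidate: Image, tolerance: int = 0) -> float:
    """Return the fraction of pixels that differ between two screenshots.

    A pixel counts as changed when any channel differs by more than
    `tolerance`, which absorbs small rendering noise.
    """
    if len(baseline) != len(candidate) or any(
        len(a) != len(b) for a, b in zip(baseline, candidate)
    ):
        # A size change is itself a regression signal; treat it as a full diff.
        return 1.0
    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0
    changed = 0
    for base_row, cand_row in zip(baseline, candidate):
        for (r1, g1, b1), (r2, g2, b2) in zip(base_row, cand_row):
            if max(abs(r1 - r2), abs(g1 - g2), abs(b1 - b2)) > tolerance:
                changed += 1
    return changed / total


# Usage: a 2x4 white "screenshot" with one near-identical pixel and one real change.
white = (255, 255, 255)
baseline = [[white] * 4 for _ in range(2)]
candidate = [row[:] for row in baseline]
candidate[0][0] = (250, 250, 250)  # barely different shade
candidate[1][3] = (0, 0, 0)        # genuine change

print(diff_ratio(baseline, candidate))                # 0.25
print(diff_ratio(baseline, candidate, tolerance=10))  # 0.125
```

The tolerance shows why raw pixel diffs need tuning: without it, the barely different shade counts as much as the genuine change.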

What manual UI review is good at

Manual review validates what automated diffs cannot fully judge: intent, usability, and context.

Manual review is best for:

- new or evolving features

- complex interaction states (hover, focus, loading, empty, error)

- accessibility quality checks beyond simple pass/fail, including contrast validation on the live UI

- product decisions requiring design judgment

Automation can tell you that pixels changed. Humans tell you whether that change is good.

The practical framework: what to use when

- Use visual regression for broad, repeatable, high-volume drift detection.

- Use manual UI review for quality, intent, and user-facing nuance.

- Use both for release candidates and high-impact user journeys.
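These three rules can be expressed as a small routing function. The `UIChange` fields and the `review_methods` name are hypothetical; a team would derive such flags from its own PR labels, changed file paths, or release process. In this sketch an empty result simply means none of the listed risk types applies.

```python
from dataclasses import dataclass
from typing import Set


@dataclass
class UIChange:
    # Hypothetical attributes a team might record for a change.
    touches_shared_components: bool = False
    is_new_or_evolving_feature: bool = False
    needs_design_judgment: bool = False
    is_release_candidate: bool = False
    is_high_impact_journey: bool = False


def review_methods(change: UIChange) -> Set[str]:
    """Map a change to review methods per the what-to-use-when rules."""
    # Release candidates and high-impact journeys get both layers.
    if change.is_release_candidate or change.is_high_impact_journey:
        return {"visual_regression", "manual_review"}
    methods: Set[str] = set()
    # Broad, repeatable drift detection for shared building blocks.
    if change.touches_shared_components:
        methods.add("visual_regression")
    # Intent, usability, and design judgment need a human.
    if change.is_new_or_evolving_feature or change.needs_design_judgment:
        methods.add("manual_review")
    return methods


print(review_methods(UIChange(touches_shared_components=True)))
print(review_methods(UIChange(is_release_candidate=True)))
```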

Think in layers:

- Automated guardrails

- Human validation

- Structured bug reporting

A hybrid workflow that works

- Run visual regression on pull requests for shared components and critical pages.

- Auto-flag only diffs above a tuned threshold (for example, a minimum share of changed pixels) so rendering noise does not bury real changes.

- Manually review flagged diffs and high-risk flows at key breakpoints and states.

- Report issues with expected vs. actual behavior, environment details, and reproducible evidence; attach a screen recording when playback makes the repro clearer.

- Re-verify the fix using both methods.
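The flagging and reporting steps of this workflow might look like the following Python sketch. `DiffResult`, `BugReport`, `flag_for_review`, and the default threshold of 0.001 (0.1% of pixels) are all hypothetical; any real threshold should be tuned against the baseline noise of your own screenshots, and the environment and evidence values are placeholders.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DiffResult:
    # Hypothetical output of a visual regression run for one snapshot.
    name: str          # snapshot id, e.g. component plus breakpoint
    diff_ratio: float  # fraction of changed pixels, 0.0 to 1.0


@dataclass
class BugReport:
    title: str
    expected: str
    actual: str
    environment: Dict[str, str]
    evidence: List[str] = field(default_factory=list)  # screenshot/recording refs

    def to_markdown(self) -> str:
        """Render the report with expected vs. actual, environment, and evidence."""
        env = "\n".join(f"- {k}: {v}" for k, v in sorted(self.environment.items()))
        ev = "\n".join(f"- {e}" for e in self.evidence) or "- (none attached)"
        return (
            f"## {self.title}\n\n"
            f"**Expected:** {self.expected}\n\n"
            f"**Actual:** {self.actual}\n\n"
            f"**Environment:**\n{env}\n\n"
            f"**Evidence:**\n{ev}\n"
        )


def flag_for_review(results: List[DiffResult], threshold: float = 0.001) -> List[DiffResult]:
    """Keep only diffs above the threshold, largest first, for manual review."""
    flagged = [r for r in results if r.diff_ratio > threshold]
    return sorted(flagged, key=lambda r: r.diff_ratio, reverse=True)


# Usage with placeholder results from a hypothetical PR run.
results = [
    DiffResult("header@1280px", 0.0002),          # below threshold: treated as noise
    DiffResult("checkout/button@375px", 0.04),
    DiffResult("footer@768px", 0.002),
]
flagged = flag_for_review(results)
print([r.name for r in flagged])  # ['checkout/button@375px', 'footer@768px']

report = BugReport(
    title="Checkout button clipped at 375px",
    expected="Button label fully visible",
    actual="Label truncated after a dependency update",
    environment={"browser": "Chrome", "viewport": "375x812"},
    evidence=["<link to recording>"],  # placeholder, not a real URL
)
print(report.to_markdown())
```

Sorting flagged diffs by size puts the most likely real regressions at the top of the manual review queue.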

Final take

Visual regression and manual UI review are not substitutes. They are complementary controls for different types of risk.

Teams that ship UI faster do not pick one method and hope for the best. They build a layered review process that catches drift early, validates quality thoughtfully, and makes every bug report actionable.